Process analytical technology — universally shortened to PAT — is the practice of measuring the chemistry of a process while the process is running, and using those measurements to control quality at the point it is created. The term was popularized by a 2004 U.S. Food and Drug Administration guidance document for pharmaceutical manufacturing, but the underlying idea — that you cannot inspect quality into a finished batch, you can only build it in — is older and applies far beyond pharma.

This article is a primer for engineers and scientists who have heard PAT mentioned in a project meeting and want a working definition before the next conversation.

The one-sentence definition

PAT is a system for designing, analyzing, and controlling manufacturing processes through timely measurement of critical quality and performance attributes of raw materials, in-process materials, and processes, with the goal of ensuring final product quality — wording adapted from the FDA’s 2004 guidance.

Three words in that sentence do most of the work:

- Timely. The measurement happens fast enough to matter for the next decision. For a continuous polymerization, that may mean every few seconds; for a tablet press, every few minutes; for a fermentation, every few hours.

- Critical. Not every parameter matters. A PAT program identifies the small number of attributes that drive quality — the critical quality attributes (CQAs) — and the parameters of the process that move them — the critical process parameters (CPPs).

- Control. Measurement without control is monitoring. PAT presumes that the measurement feeds a decision: an automatic correction, an alarm, a release decision, a hold.

Why the FDA wrote a guidance for it

Before 2004, pharmaceutical manufacturing operated under a regulatory model that was conservative about change. A validated process was, in practice, frozen: any modification — a different probe, a new chemometric model, a tightened temperature setpoint — risked re-validation. The result was a manufacturing base that lagged the chemical industry in process understanding by decades.

The 2004 FDA guidance reframed the goal. Instead of replicating a validated process exactly, manufacturers were encouraged to understand the process well enough to control it within agreed quality boundaries. The vehicle for that understanding was real-time measurement — and the regulator made clear that adopting PAT-style monitoring would not, on its own, trigger new regulatory burden.

The companion frameworks — Quality by Design (QbD), articulated in ICH Q8, and Quality Risk Management in ICH Q9 — formalized the rest. Together, the three documents define the modern regulatory expectation for new pharmaceutical processes.

The four building blocks

PAT in practice comprises four building blocks.

1. Process understanding

A PAT program begins with a model of the process, not a sensor. The model identifies which attributes of the input materials and which process parameters cause variation in the output, and over what range. The artifacts are familiar to anyone who has done design of experiments: cause-and-effect diagrams, risk assessments, statistical screening.

This stage is unglamorous and unavoidable. Buying an inline analyzer before identifying what to measure tends to produce expensive data without decisions.
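As an illustration of the statistical screening mentioned above, here is a minimal sketch that estimates main effects from a two-level full factorial design. The factor names, the coded levels, and the synthetic response model are all assumptions made up for this example; a real study would use measured responses and would also look at interactions and replicate noise.

```python
from itertools import product

def main_effects(factors, runs):
    """Estimate main effects from a two-level full factorial design.

    factors: list of factor names.
    runs: dict mapping a tuple of coded levels (-1 or +1, one per factor)
          to the measured response for that run.
    Returns a dict: factor name -> mean response at +1 minus mean response at -1.
    """
    effects = {}
    for i, name in enumerate(factors):
        high = [y for levels, y in runs.items() if levels[i] == +1]
        low = [y for levels, y in runs.items() if levels[i] == -1]
        effects[name] = sum(high) / len(high) - sum(low) / len(low)
    return effects

# Hypothetical factors, for illustration only.
factors = ["temperature", "feed_rate", "stir_speed"]

def synthetic_response(t, f, s):
    # Made-up response (say, impurity in %): temperature dominates,
    # feed rate matters less, stirring speed has no effect.
    return 2.0 + 0.8 * t + 0.3 * f + 0.0 * s

# One run per combination of coded levels: 2^3 = 8 runs.
runs = {levels: synthetic_response(*levels) for levels in product((-1, +1), repeat=3)}

effects = main_effects(factors, runs)
# Rank factors by the size of their effect; the top entries are CPP candidates.
ranked = sorted(effects, key=lambda name: abs(effects[name]), reverse=True)
print(ranked)
```

The ranking is the useful output: factors with negligible effects can be dropped from further study before any analyzer is purchased.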

2. Sensing

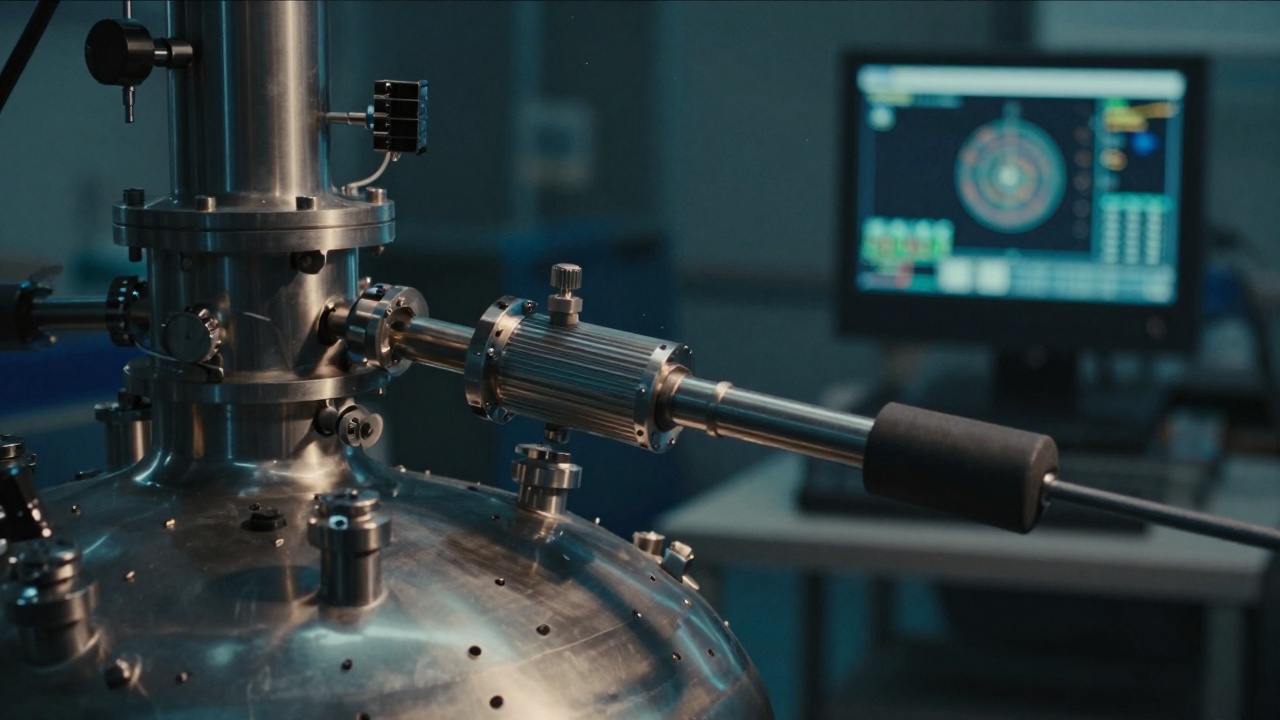

Once the critical attributes are known, the team selects sensors that measure them in the timeframe the process needs.

For chemical composition, the most common PAT use case, vibrational and electronic spectroscopy do most of the work. Near-infrared (NIR) is fast, robust, and inexpensive but less specific. Raman is more chemically specific and works well in aqueous systems where NIR struggles, but is sensitive to fluorescence. Mid-IR (FTIR) excels in solvent monitoring and reaction tracking but typically requires probe contact. UV-Vis covers chromophoric species. Mass spectrometry serves where ultimate specificity is required and its measurement rate is acceptable.

Beyond spectroscopy, the toolbox includes focused beam reflectance measurement (FBRM) for particle size (strictly, chord length distributions), ultrasound for solids concentration, capacitance for cell density in bioreactors, and a long tail of process-specific probes. The right answer is rarely a single technique.

3. Data analysis

Raw spectra are not decisions. The bridge is chemometrics: the application of multivariate statistical methods to chemical data, here spectra. Partial least squares (PLS) regression is the workhorse: a calibration set links spectra to reference values (from titration, HPLC, or Karl Fischer titration), and the resulting model predicts the reference value from new spectra. Principal component analysis (PCA) detects when a new spectrum looks unlike the calibration set, flagging excursions before they cause off-spec product.
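The two roles described above can be sketched with NumPy alone. This is a hedged illustration, not a validated method: the spectra are synthetic Gaussian bands, the PLS model is a bare single-response NIPALS fit with no preprocessing or cross-validation, and the outlier limit (three times the largest calibration residual) is an arbitrary assumption standing in for a statistically justified control limit.

```python
import numpy as np

def pls1_fit(X, y, n_components):
    """Fit a single-response PLS model with the NIPALS algorithm."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xr, yr = X - x_mean, y - y_mean           # mean-centred working copies
    W, P, q = [], [], []
    for _ in range(n_components):
        w = Xr.T @ yr                         # direction of maximum covariance with y
        w /= np.linalg.norm(w)
        t = Xr @ w                            # scores
        tt = t @ t
        p = Xr.T @ t / tt                     # X loadings
        c = (yr @ t) / tt                     # y loading
        Xr = Xr - np.outer(t, p)              # deflate X and y
        yr = yr - c * t
        W.append(w); P.append(p); q.append(c)
    W, P, q = np.array(W).T, np.array(P).T, np.array(q)
    coef = W @ np.linalg.solve(P.T @ W, q)    # regression vector in spectral space
    return x_mean, y_mean, coef

def pls1_predict(x, model):
    x_mean, y_mean, coef = model
    return float(y_mean + (x - x_mean) @ coef)

def pca_fit(X, n_components):
    """Return the calibration mean and the leading PCA loadings (via SVD)."""
    mean = X.mean(axis=0)
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:n_components].T

def q_residual(x, mean, loadings):
    """Squared distance between a spectrum and its PCA reconstruction."""
    xc = x - mean
    residual = xc - loadings @ (loadings.T @ xc)
    return float(residual @ residual)

# Synthetic calibration set: analyte and solvent bands plus noise (all made up).
rng = np.random.default_rng(0)
axis = np.linspace(0.0, 1.0, 200)
band = lambda center: np.exp(-0.5 * ((axis - center) / 0.05) ** 2)
analyte, solvent, contaminant = band(0.3), band(0.6), band(0.8)
conc = rng.uniform(0.1, 1.0, size=40)         # stands in for reference values
solv = rng.uniform(0.5, 1.5, size=40)
X_cal = np.outer(conc, analyte) + np.outer(solv, solvent) + rng.normal(0, 0.01, (40, 200))

model = pls1_fit(X_cal, conc, n_components=2)
mean, loadings = pca_fit(X_cal, n_components=2)
# Illustrative limit only: 3x the worst residual seen during calibration.
q_limit = 3.0 * max(q_residual(x, mean, loadings) for x in X_cal)

new_normal = 0.5 * analyte + 1.0 * solvent + rng.normal(0, 0.01, 200)
new_contaminated = new_normal + 0.2 * contaminant   # species absent from calibration

predicted = pls1_predict(new_normal, model)
normal_flag = q_residual(new_normal, mean, loadings) > q_limit
contaminated_flag = q_residual(new_contaminated, mean, loadings) > q_limit
print(predicted, normal_flag, contaminated_flag)
```

The design point is that the two checks are complementary: the PLS model will happily return a number for the contaminated spectrum, and it is the residual check that says the number should not be trusted.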

More recent additions — convolutional neural networks for spectral feature extraction, Gaussian process regression for uncertainty estimates — augment but rarely replace the classical methods, which remain easier to validate.

4. Process control

The closed loop is what separates PAT from in-line monitoring. The measurement triggers an action: the dosing pump increases flow, the cooling jacket steps down, the operator interface raises an alarm, the batch is held for sampling. In simple cases the controller is a PID loop; in complex multivariate cases it is a model predictive controller; in pharmaceutical batch release, it can be the conditional release decision itself.
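For the simple case, the loop can be sketched as a discrete PI controller (a PID loop without the derivative term) driving a dosing pump. Everything here is an assumption for illustration: the first-order process model, its gain and time constant, the tuning values, and the 0 to 1 pump range. In a real installation the measured value would come from the analyzer and chemometric model rather than from a simulation.

```python
def simulate_pi_loop(setpoint, steps=200, dt=1.0, kp=0.8, ki=0.1,
                     process_gain=2.0, time_constant=10.0):
    """Close a PI loop around a hypothetical first-order process.

    Returns the list of simulated measurements, one per time step.
    """
    measured = 0.0      # stands in for the analyzer reading
    integral = 0.0
    history = []
    for _ in range(steps):
        error = setpoint - measured
        candidate_integral = integral + error * dt
        raw_output = kp * error + ki * candidate_integral
        pump = min(max(raw_output, 0.0), 1.0)   # pump can only run between 0 and 100 %
        if pump == raw_output:
            # Simple anti-windup: only accumulate the integral when not saturated.
            integral = candidate_integral
        # First-order response: d(measured)/dt = (gain * pump - measured) / tau
        measured += dt * (process_gain * pump - measured) / time_constant
        history.append(measured)
    return history

history = simulate_pi_loop(setpoint=1.0)
final_value = history[-1]
print(round(final_value, 3))
```

The same structure applies when the action is an alarm or a hold instead of a pump command: the measurement is compared with a target and the difference drives a defined response.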

Where PAT shows up beyond pharma

Although the FDA guidance was pharma-specific, the framework migrated outward. Five examples:

- Polymer manufacturing. Inline Raman or NIR on reactors monitors monomer conversion and copolymer composition, allowing endpoint control instead of timed batches.

- Specialty chemicals. Reaction monitoring for endpoint detection, by-product formation, and crystallization control — historically the home of mid-IR probes from companies like Mettler Toledo.

- Food and beverage. NIR on dairy, oil, and grain streams for moisture, fat, and protein content, often replacing offline lab tests that delayed grading decisions by hours.

- Semiconductor processing. Optical emission spectroscopy and quadrupole mass spectrometry for plasma process endpoint detection. The principles are PAT; the vocabulary is not.

- Continuous biomanufacturing. Raman, capacitance, and dissolved-gas sensors on perfusion bioreactors, feeding the process model that runs the bioreactor.

The vocabulary shifts — PAT in pharma, process analytics in chemicals, real-time release in biomanufacturing, advanced process control in petrochemicals — but the engineering is the same.
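Endpoint control, which appears in several of the examples above, reduces to a small decision rule once a real-time estimate is available. The sketch below is one possible rule, not an industry standard: it declares the endpoint when the predicted conversion has stayed at or above a target for a set number of consecutive readings, so that a single noisy spike does not end the batch early. The readings, target, and streak length are hypothetical.

```python
def find_endpoint(readings, target, consecutive=3):
    """Return the index of the reading at which the endpoint is declared.

    The endpoint is declared once `consecutive` readings in a row are at or
    above `target`. Returns None if that never happens.
    """
    streak = 0
    for i, value in enumerate(readings):
        streak = streak + 1 if value >= target else 0
        if streak >= consecutive:
            return i
    return None

# Hypothetical conversion estimates from an inline analyzer, one per scan.
conversion = [0.62, 0.81, 0.96, 0.93, 0.95, 0.96, 0.97, 0.98]
endpoint_index = find_endpoint(conversion, target=0.95)
print(endpoint_index)
```

In this series the isolated reading of 0.96 at index 2 does not trigger the endpoint, because the next reading falls back below the target.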

What PAT is not

Three things PAT is not, and that are sometimes confused with it:

- PAT is not a sensor. A Raman probe in a reactor is not, by itself, PAT. It becomes PAT when its output drives a quality decision.

- PAT is not laboratory automation. A lab robot that runs the same titration faster is useful, but offline. PAT measures where the chemistry is, when it is happening.

- PAT is not Industry 4.0. The two ideas overlap — connectivity and data infrastructure are necessary for both — but Industry 4.0 is broader and PAT is older.

Where to start reading

Two documents do most of the foundational work and are still the right starting point twenty years later: the FDA’s 2004 PAT guidance and ICH Q8(R2). The ASTM E2363 standard provides the consensus terminology if you find yourself in conversations where people are using the same words to mean different things — which, in this field, is most conversations.

This article is part of an ongoing series on the methods and instruments of process analytics. For corrections, write to [email protected].